LinkedIn now has more than 1 billion members in more than 200 countries and territories (LinkedIn official figures, 2024). For anyone in B2B sales, recruiting, or growth, that database is the closest thing the internet has to a free contact directory. The catch is that “extracting” data from LinkedIn in 2026 is no longer one workflow. It is at least four, and picking the wrong one will cost you weeks, money, or a banned account.

This guide lays out the four methods in plain language, what each one can actually pull, what LinkedIn’s terms allow in 2026, and how to decide which approach fits your team. It is built for SDRs, growth marketers, recruiters, and founders who need clean LinkedIn data inside a working pipeline, not a research paper on web scraping ethics.

Chapter 1: What LinkedIn data extraction actually means

The phrase “LinkedIn data extraction” gets used loosely. Some people mean copy-pasting profiles into a spreadsheet. Others mean automated scrapers that crawl thousands of pages overnight. Others mean an enrichment API that returns structured JSON.

These workflows look similar from the outside (data leaves LinkedIn, lands somewhere usable) but they sit on very different legal, technical, and operational ground. Treating them as one thing is how teams end up with banned accounts, broken pipelines, or invoices they did not budget for.

The three things people usually want

When a sales or growth team says “we need to extract data from LinkedIn”, the underlying need is almost always one of three:

- Profile data at the lead level. First name, last name, headline, current company, role, location, languages, sometimes summary or skills. Useful for cold outreach personalization and CRM enrichment.

- Company data at the account level. Company name, LinkedIn URL, size, industry, headquarters, tagline, founding year, sometimes employee list. Useful for ABM, list-building, and intent monitoring.

- Contact details that LinkedIn does not show directly. Email and phone, found by inference or third-party data sources, then attached back to the LinkedIn profile.

Knowing which of the three you actually need narrows the right method considerably. A recruiter pulling 50 candidate profiles a day has different requirements than a growth team scoring 10,000 accounts per quarter.
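One way to keep the three needs separate inside a pipeline is to model them as distinct record types. The sketch below is illustrative only: the class and field names are assumptions drawn from the lists above, not any vendor's schema.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ProfileRecord:
    """Lead-level fields read from a LinkedIn profile."""
    linkedin_url: str
    first_name: str
    last_name: str
    headline: str = ""
    current_company: str = ""
    role: str = ""
    location: str = ""
    languages: list[str] = field(default_factory=list)


@dataclass
class CompanyRecord:
    """Account-level fields read from a LinkedIn company page."""
    linkedin_url: str
    name: str
    industry: str = ""
    size_band: str = ""                 # e.g. "51-200"
    headquarters: str = ""
    founded_year: Optional[int] = None


@dataclass
class ContactRecord:
    """Contact details inferred outside LinkedIn, keyed back to a profile."""
    profile_url: str
    email: Optional[str] = None
    phone: Optional[str] = None
    confidence: float = 0.0             # 0.0-1.0, as reported by the source


# Hypothetical example: a lead with no contact details found yet.
lead = ProfileRecord(
    linkedin_url="https://www.linkedin.com/in/jane-doe",
    first_name="Jane",
    last_name="Doe",
)
contact = ContactRecord(profile_url=lead.linkedin_url)
```

Keeping contact details in their own record makes it explicit that email and phone come from a different source than the profile fields, and may be missing.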

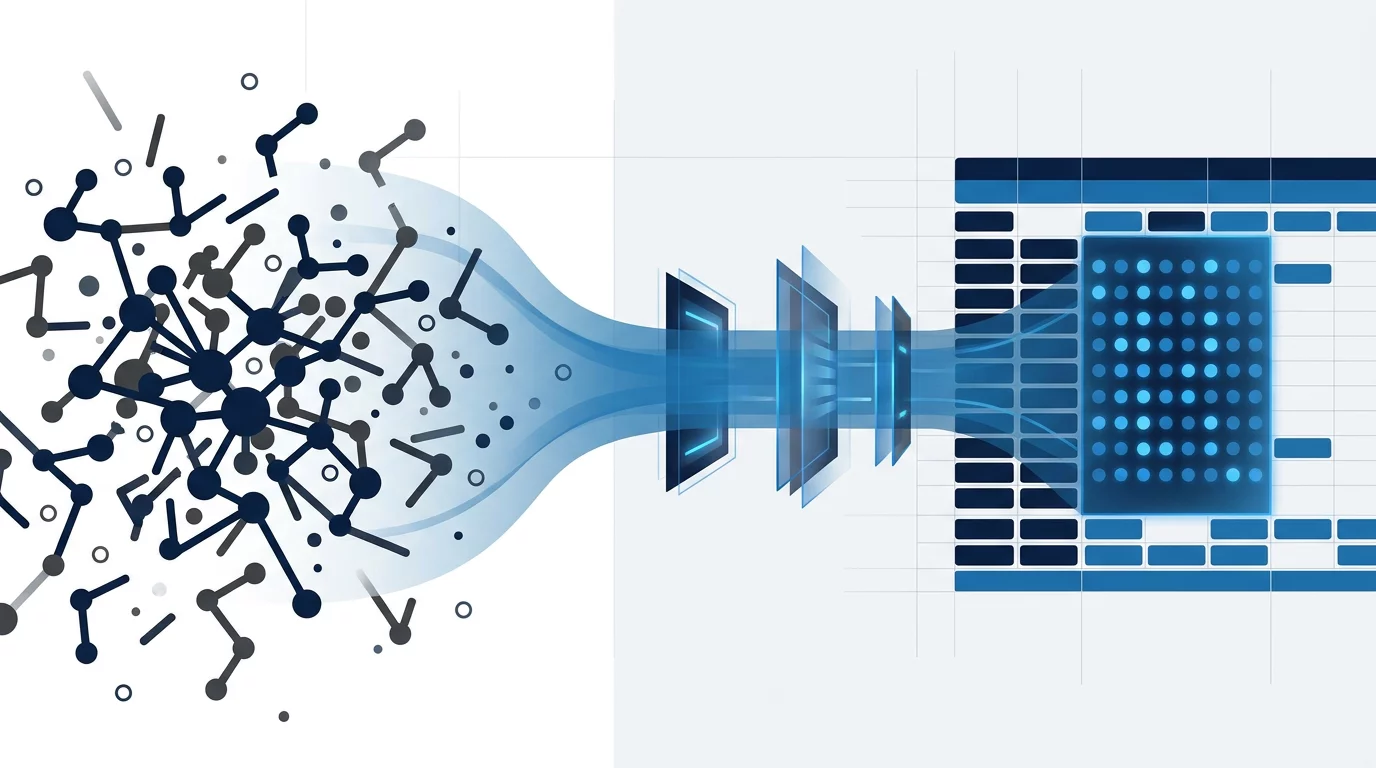

Scraping vs. API vs. native enrichment

A second clarification worth making before you pick a tool:

- Scraping means a script (or a browser extension, or a cloud bot) loads LinkedIn pages and parses their HTML. The data comes from LinkedIn’s UI directly. Fast, flexible, and the riskiest option in terms of account flags and terms-of-service exposure.

- API extraction means you query a third-party data provider that has already aggregated LinkedIn-style data from public sources, opt-in databases, and partnerships. You get clean JSON. No LinkedIn account is touched, but the data is one step removed from LinkedIn itself.

- Native enrichment means a tool that lives inside the surface you already use (Google Sheets, your browser, your AI assistant) and does the extraction quietly in the background, using a mix of API lookups and sometimes browser-side reads. From the user’s point of view, it is one click. From the data-flow point of view, it is closer to API extraction than scraping.

These three categories are the ones that matter. A “LinkedIn scraper” Chrome extension that runs from your own browser, logged into your own account, sits in a fourth in-between category that we will treat as a sub-flavor of scraping (because the legal and technical risk profile follows the scraping side, not the API side).

Key takeaways:

- LinkedIn data extraction usually means profile, company, or contact data

- The three real methods are scraping, third-party API, and native enrichment

- The official LinkedIn API exists but rarely covers prospecting use cases (more on this in Chapter 2)

- Picking the right method depends on volume, risk tolerance, and where your team already works

Chapter 2: The 4 methods, ranked

Here are the four methods most B2B teams will encounter in 2026, ranked from highest friction (and highest risk) to lowest.

Method 1: Browser-based scrapers

Tools that install as a Chrome extension or a desktop bot, log into LinkedIn under a real account (yours or a burner), and crawl pages on your behalf. PhantomBuster, Captain Data, Evaboot, Wiza, and a long tail of smaller scrapers fit this pattern.

What they do well:

- Pull data that no API provider has, like Sales Navigator search results or LinkedIn group members.

- Flexible. If LinkedIn shows it, a scraper can usually grab it.

- Cheaper per record at scale than third-party APIs.

Where they fall down:

- LinkedIn rate-limits and flags suspicious activity. A scraper running 500 profile views in an hour from one account triggers warnings within days.

- Requires giving the tool your LinkedIn cookie or session, which means a third-party cloud holds the keys to your account.

- Maintenance is constant. LinkedIn ships UI changes; scrapers break; vendors patch. A workflow that ran on Monday may fail on Friday.

Who should use them:

Growth ops teams with one dedicated LinkedIn account they are willing to burn, doing one-off list builds where the volume justifies the maintenance. Not the right fit for daily ongoing enrichment.

For a side-by-side breakdown of the major scrapers in this category, see our comparison of the 10 best LinkedIn scrapers in 2026. If you specifically want a Chrome-extension flavor of scraper that runs from your own browser, our 12 best LinkedIn scraper Chrome extensions in 2026 ranks the safe-for-account options.

Method 2: Third-party data APIs

Services that maintain their own LinkedIn-style database, refresh it from public sources and partnerships, and expose it as a REST API. The big names here are the data-broker tier (the ones that cost $2,000+ per month) and a middle tier of API-first enrichment services that charge per credit.

What they do well:

- No LinkedIn account needed. The legal and technical risk shifts to the provider.

- Reliable rate limits, documented endpoints, predictable pricing.

- Easy to wire into a CRM, n8n, Make, Zapier, or a custom backend.

Where they fall down:

- Coverage varies wildly by region and seniority. US enterprise data is usually deep; mid-market European data is often patchy.

- Data freshness is provider-dependent. Some refresh weekly; others quarterly.

- Per-record cost runs from $0.05 to $0.50 depending on the provider and the field. At 10,000 records per month, the bill scales fast.
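To make "scales fast" concrete, here is the arithmetic for the 10,000-records-per-month case at both ends of the quoted price range. The function name is hypothetical, and the prices are simply the range quoted above.

```python
def monthly_api_bill(records_per_month: int, cost_per_record: float) -> float:
    """Monthly spend in dollars for a flat per-record price."""
    return round(records_per_month * cost_per_record, 2)


low = monthly_api_bill(10_000, 0.05)
high = monthly_api_bill(10_000, 0.50)
print(f"${low:,.0f} to ${high:,.0f} per month")  # prints "$500 to $5,000 per month"
```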

Who should use them:

Engineering teams building enrichment into a product, RevOps teams piping data into a CRM, or any workflow where you need predictability over flexibility.

For a deeper look at the three categories of LinkedIn data extraction APIs (broker-tier, mid-tier, micro-API), what each returns and what each costs in 2026, see our breakdown of the LinkedIn data extraction API landscape.

Method 3: The official LinkedIn API

LinkedIn does publish an official API. In 2026, it is also probably not what you are looking for if you are reading this guide.

The API is split across product programs (Marketing Developer Platform, Sales Navigator API, Talent Solutions). Access requires a partner application, an approved use case, and in most cases a paid LinkedIn product subscription. The endpoints expose advertising data, member messaging, and Sales Navigator search results to approved partners, but they explicitly do not expose member profile data for cold prospecting at scale.

What it does well:

- Fully sanctioned by LinkedIn. Zero account-flag risk.

- Stable. Endpoints are versioned and supported.

- A real option for ad-tech, ATS integrations, and some Sales Navigator workflows.

Where it falls down:

- The application process is slow. Weeks to months for partner status, and rejection is common for ambiguous use cases.

- Profile data for cold prospecting is not on the menu. Even Sales Navigator API access is gated behind enterprise contracts.

- Terms forbid combining LinkedIn API data with scraping outputs. If you mix flows, you violate the agreement.

Who should use it:

Companies building an integration LinkedIn would happily endorse: an ATS, an ad-tech platform, a CRM bidirectional sync that meets the partner program criteria. If you are an SDR trying to enrich a prospect list, the official API is not your path.

Method 4: Native enrichment

The newest category, and the one that has grown fastest in 2026. Native enrichment tools live inside the surface a user already works in, fetch data through a mix of API lookups and (in the case of the Chrome variant) browser-side reads on pages the user already opens, and write results back into the same surface.

The defining trait is that the user does not switch context. The tool is a sidebar in Google Sheets, an extension that wakes up on a LinkedIn page, an MCP endpoint your AI assistant can call, or an API endpoint your script already speaks to.

What native enrichment does well:

- Lowest friction of any method. One click, results appear in the workflow that was already open.

- No standalone tool to log into. The “tool” is a feature of the surface the team uses (Sheets, browser, Claude, ChatGPT).

- Cost scales with use rather than seat count. Pay-per-credit, not per-seat-per-month.

Where it falls down:

- Native enrichment is newer; ecosystem maturity varies. Some surfaces are solid (Google Sheets), others (MCP, Chrome extensions tied to AI assistants) are still firming up in 2026.

- The data still has to come from somewhere. Underneath the sidebar, the tool calls APIs (Method 2) or in some cases reads pages (Method 1). The legal and technical model of the underlying source still applies.

Who should use it:

Sales, growth, and recruiting teams who already live in Google Sheets, a browser, or an AI assistant, and want LinkedIn data inside that surface without picking up a new tool. Teams that need to enrich tens to thousands of leads per week without standing up an engineering project.

This is the method Derrick is built around. More on the specifics in Chapter 6.

Key takeaways:

- Browser scrapers are flexible but expose your LinkedIn account to risk

- Third-party APIs are reliable but priced per record, which adds up

- The official LinkedIn API rarely covers prospecting needs in 2026

- Native enrichment is the lowest-friction option when you live in Sheets, a browser, or an AI assistant

Chapter 3: What you can actually extract

Different methods unlock different fields. Here is the practical map of what is reachable in 2026, organized by data type.

Profile-level data (one LinkedIn profile in, structured fields out)

| Field | Available via | Notes |
|---|---|---|
| Full name | All methods | Trivially available |
| Headline | All methods | The 220-char tagline at the top of the profile |
| Current company | All methods | Resolved to a clean LinkedIn company URL by good tools |
| Job title | All methods | Sometimes split into title + seniority |
| Location | All methods | City + country usually; some providers add postal code |
| Languages spoken | Scraping + native | Less common in third-party APIs |
| Skills | Scraping + native | Often noisy because users tag liberally |
| Summary / About | Scraping + native | Long text, useful for AI scoring |
| Education + work history | Scraping + native + some APIs | Premium feature on most paid tiers |
| Email | Native + APIs (waterfall sources) | Found by inference, not extracted from LinkedIn directly |
| Phone | Native + APIs (waterfall sources) | Same; quality varies wildly by region |
| Profile picture | Scraping + native | URL to the LinkedIn-hosted asset |

For a deeper walk-through of pulling specific profile fields, see our guide on how to extract company info from a LinkedIn profile URL and the workflow for finding a LinkedIn profile by full name.

Company-level data (one LinkedIn company URL in, structured fields out)

| Field | Available via | Notes |
|---|---|---|
| Company name | All methods | |
| LinkedIn company URL | All methods | The canonical handle |
| Industry | All methods | Standard NAICS-like categories |
| Employee count | All methods | Usually a banded range, sometimes exact |
| Headquarters location | All methods | |
| Founded year | Scraping + native + some APIs | |
| Tagline | Scraping + native | Short pitch under the company name |
| Specialties / topics | Scraping + native | Often noisy |
| Website URL | All methods | |
| Tech stack | Native + dedicated APIs | Usually a separate enrichment step (BuiltWith, Wappalyzer-class data) |
| Funding rounds | Native + dedicated APIs | Crunchbase-class data, attached on request |
| Employee list | Scraping + dedicated APIs | The hard one; LinkedIn rate-limits this aggressively |

If you specifically need the employee list, our guide on how to find a company’s employees on LinkedIn covers the trade-offs.

Contact data not visible on the profile itself

Email and phone are the two contact fields most teams chase. Neither is “extracted from LinkedIn” in the literal sense (LinkedIn does not expose them publicly). What good tools do is take the LinkedIn profile as input, then run a waterfall of public sources, opt-in databases, and verification providers to return a likely email or phone, with a confidence score.

Neither field is ever 100% complete or accurate. That is not any one tool’s fault: the underlying data is patchy, and email data decays at roughly 25% per year (HubSpot State of Marketing reports). What you should expect from a serious tool:

- An email-found rate of 40-70% on B2B lists, depending on seniority and region

- A phone-found rate of 15-40% on the same lists

- A confidence or verification score so you know which results to trust
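The waterfall idea can be sketched in a few lines: try each source in order, stop at the first result that clears a confidence threshold, and otherwise keep the best weak result. Everything here is an assumption for illustration (the `Lookup` type, the stub sources, the 0.8 threshold); real tools differ in how they order and score sources.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Lookup:
    value: str
    confidence: float  # 0.0-1.0
    source: str


# A source takes a LinkedIn profile URL and returns a Lookup, or None on a miss.
Source = Callable[[str], Optional[Lookup]]


def waterfall(
    profile_url: str, sources: list[Source], min_confidence: float = 0.8
) -> Optional[Lookup]:
    """Return the first confident hit; otherwise the best weak hit, or None."""
    best: Optional[Lookup] = None
    for source in sources:
        hit = source(profile_url)
        if hit is None:
            continue
        if hit.confidence >= min_confidence:
            return hit
        if best is None or hit.confidence > best.confidence:
            best = hit
    return best


# Stub sources standing in for real providers (hypothetical data).
def public_source(url: str) -> Optional[Lookup]:
    return None


def optin_source(url: str) -> Optional[Lookup]:
    return Lookup("jane@example.com", 0.6, "optin_source")


def verifier_source(url: str) -> Optional[Lookup]:
    return Lookup("jane.doe@example.com", 0.92, "verifier_source")


url = "https://www.linkedin.com/in/jane-doe"
result = waterfall(url, [public_source, optin_source, verifier_source])
```

Returning the confidence alongside the value is what lets a downstream step decide which results to trust and which to send for verification.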

For the phone-finder workflow specifically, our breakdown on how to find phone numbers from a LinkedIn profile URL walks through the credit cost, the verification step, and the realistic hit rate.

For the email side, our comparison of LinkedIn email finder tools in 2026 ranks the major options by hit rate, pricing, and bulk workflow.

Engagement and reach signals (when they are exposed)

A category that has grown in 2026: surface-level engagement signals attached to profiles and companies. Follower count, connection count, posting activity. These are useful for ABM scoring (a company that grew its follower count by 30% in a quarter is signaling something) and for outreach personalization.

LinkedIn does not expose these uniformly. Public profiles show some; logged-in views show more. Tools that pull these signals usually do so through a Chrome-side read or a dedicated micro-feature. See our LinkedIn followers and connections count feature for the live workflow.
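A follower-growth signal like the 30% example above is a simple ratio between two snapshots. The sketch below assumes you already store a follower count per quarter; the function names and the default threshold are illustrative.

```python
def growth_rate(previous: int, current: int) -> float:
    """Relative change between two follower-count snapshots."""
    if previous <= 0:
        raise ValueError("previous count must be positive")
    return (current - previous) / previous


def is_growth_signal(previous: int, current: int, threshold: float = 0.30) -> bool:
    """True when growth over the period meets or exceeds the threshold."""
    return growth_rate(previous, current) >= threshold


fast_grower = is_growth_signal(10_000, 13_500)   # 35% growth
steady = is_growth_signal(10_000, 11_000)        # 10% growth
```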

Key takeaways:

- Profile fields, company fields, and contact details are three distinct extraction problems

- Email and phone are inferred, not extracted directly from LinkedIn

- Realistic email match rates are 40-70%, phone 15-40% on B2B lists

- Engagement signals like follower count are increasingly useful for ABM scoring

Chapter 4: The legal and technical limits LinkedIn enforces in 2026

This is the chapter that ages the fastest. Two things have stayed broadly stable since 2023: what the User Agreement forbids, and the fact that LinkedIn enforces unpublished rate limits. The details shift quarter by quarter.

What the LinkedIn User Agreement says about extraction

LinkedIn’s terms of service prohibit “scraping” and “automated access” to the platform. The relevant clauses have been in place since at least the early 2010s. In practice, three categories of activity are explicitly forbidden:

- Using bots, scripts, or automated tools to copy data from LinkedIn pages

- Reverse-engineering or bypassing technical limits LinkedIn enforces

- Storing copies of LinkedIn member data for resale

Enforcement against individual scrapers happens through account flags, suspensions, and in extreme cases legal action. The most cited case is hiQ Labs v. LinkedIn (multiple rulings between 2017 and 2022), where the Ninth Circuit held, at the preliminary-injunction stage, that scraping publicly accessible LinkedIn profiles likely did not violate the Computer Fraud and Abuse Act. A district court later found that hiQ had breached LinkedIn’s User Agreement, the case settled in late 2022, and hiQ shut down. The legal picture remains contested. Public-page scraping is not automatically a federal crime in the US, but it is a TOS violation, and that matters for civil action and account access.

In the EU, the GDPR adds another layer. Even if scraping is technically possible, processing personal data without a lawful basis (legitimate interest, contract, consent) exposes you to fines of up to €20 million or 4% of global annual turnover, whichever is higher. For B2B prospecting, “legitimate interest” is usually defensible if you have a clear opt-out, retention policy, and proportionality argument. For consumer-facing scraping, it is much harder.

Practical rate limits LinkedIn enforces

Beyond the legal layer, LinkedIn applies technical rate limits. These are not officially published but have been documented through community testing and tool-vendor experience:

- Profile views per day: roughly 80-100 for a standard account before warnings appear. Sales Navigator allows more.

- Search results visible: the Sales Nav search is the deepest, but even there results cap at 2,500 for any single query. Scrapers that paginate beyond this hit empty pages or CAPTCHA.

- Connection requests: widely reported as capped at roughly 100 per week since 2021 (about 20 per working day), with adjustments since. Not a scraping limit per se, but a key one for outbound flows.

- API rate limits (for approved partners): documented per endpoint, usually 100 requests per minute per app token, with daily caps.

A scraper that ignores these limits will get the LinkedIn account flagged within days. A scraper that respects them will be slow, which usually defeats the original reason for using a scraper.
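To see why a limit-respecting scraper is slow, divide the list size by the daily ceiling. The sketch below uses the unofficial 80-100 views-per-day range above and a hypothetical 10,000-profile list on a single standard account.

```python
import math


def days_to_scrape(profiles: int, views_per_day: int) -> int:
    """Whole days needed to view every profile without exceeding the daily cap."""
    if views_per_day <= 0:
        raise ValueError("views_per_day must be positive")
    return math.ceil(profiles / views_per_day)


slow_case = days_to_scrape(10_000, 80)    # 125 days
fast_case = days_to_scrape(10_000, 100)   # 100 days
```

At three to four months for one list, the scraper's speed advantage is gone before the account-flag question even comes up.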

What native enrichment changes legally

Native enrichment tools that use third-party APIs underneath sit outside the LinkedIn TOS conversation entirely. The data is sourced from public sources and opt-in databases held by the API provider, not pulled from LinkedIn pages in real time. The legal exposure shifts to the API provider’s data licensing, which a serious provider has worked out with their own legal team.

Native enrichment tools that include a Chrome-side read step are in a softer category. They run in the user’s own browser, logged into the user’s own LinkedIn account. They do not give a third-party cloud the user’s session cookie. The legal risk is closer to “what one user can do manually” than to “what an automated cloud bot does at scale.”

Quick decision matrix on legal risk

| Method | LinkedIn TOS exposure | GDPR exposure | Practical account risk |
|---|---|---|---|
| Cloud scraper with stored cookie | High | High | High |
| Self-hosted scraper, your own account | Medium | High | Medium |
| Third-party data API | None directly | Medium (provider’s responsibility) | None |
| Official LinkedIn API | None | Medium | None |
| Native enrichment (API underneath) | None directly | Medium | None |
| Native enrichment (Chrome-side read) | Low | Medium | Low |

Treat the table as a guide, not legal advice. If your use case is high-volume or consumer-facing, talk to a privacy lawyer before committing to a method.

Key takeaways:

- LinkedIn’s TOS forbids automated extraction; enforcement is via account flags and civil action

- The hiQ Labs v. LinkedIn rulings make the public-page scraping picture less clear-cut, but TOS still applies

- Practical rate limits cap a standard account at ~80-100 profile views per day

- API and native enrichment shift the LinkedIn TOS exposure off the user, onto the data provider

Chapter 5: How to pick the right method

Six questions, in order. Answer them and the right method falls out.

Question 1: How many records per month?

- Under 500: most teams can do this manually, in a sheet, with a free or low-cost native enrichment tool. Method 4.

- 500 to 10,000: native enrichment in Sheets, an API plug, or a Chrome extension. Method 4 (or Method 2 if engineering resources are dedicated).

- 10,000 to 100,000: third-party API, possibly combined with a native layer for the team-facing workflow. Method 2 (with Method 4 wrapper).

- 100,000+: data broker tier or in-house data partnership. Out of scope for this guide.

Question 2: What is your tolerance for account-flag risk?

If a LinkedIn account suspension would meaningfully hurt your business (founder personal brand, recruiter sourcing, sales team’s outreach reputation), avoid Method 1. The value of the LinkedIn account itself outweighs the cost savings of a scraper.

If you have a burner account dedicated to extraction work and you can replace it cheaply, Method 1 stays on the table.

Question 3: Where does the team already work?

This is the question most teams skip and it is the highest-impact one.

- If the team lives in Google Sheets, native enrichment via a Sheets sidebar wins almost every time. The marginal cost of adopting a new tool drops to zero because it is not a new tool, it is a Sheets feature. See our walkthrough on how to extract LinkedIn data into Google Sheets for the practical setup.

- If the team lives in a CRM (HubSpot, Salesforce, Pipedrive), an enrichment workflow that exports to Sheets and then imports to the CRM is one extra step. Not painful but worth budgeting.

- If the team uses an AI assistant heavily (Claude, ChatGPT), an MCP endpoint that the assistant can call directly skips the spreadsheet step entirely.

- If the team is engineering-heavy, a third-party API plugged straight into the product or backend is the natural fit.

Question 4: How fresh does the data need to be?

- Real-time (extraction at the moment of outreach, e.g., right before sending a cold email): Method 1 and the Chrome variant of Method 4 are the only options. Third-party APIs lag by days to months.

- Daily: Method 4 with API underneath is enough.

- Weekly or slower: any method works.

Question 5: What is the budget per record?

- Under $0.02 per record: Method 1 (scraper) or a heavy-tier API contract.

- $0.02 to $0.10: most native enrichment tools and standard API tiers.

- $0.10 to $0.50: premium APIs with high coverage.

- $0.50+: data broker tier with proprietary fields.

Question 6: Do you need engineering capacity to maintain the flow?

If the answer is no, scratch Method 1 (scrapers break and need maintenance) and Method 2 in its raw form (API integrations need engineering). That leaves Method 4. This is the most common reason mid-sized teams move to native enrichment in 2026.

Putting it together

A typical small B2B team in 2026 (5-25 people, sales-led, no dedicated data engineer) lands on Method 4 by default. A growth-stage SaaS with a RevOps function usually combines Method 4 (team-facing) with Method 2 (CRM-facing, automated). An enterprise with $2,000+ per month of data budget is often on Method 2 directly with a data-broker contract.

Browser scrapers (Method 1) remain in the toolkit for one-off list builds and edge cases (LinkedIn group scraping, Sales Nav search export beyond the API limits), but rarely as the primary daily workflow.
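The decision logic can be written down as a small function. This is a deliberate simplification that encodes only Questions 1, 2, and 6 (volume, account risk, engineering capacity); the function name, parameters, and return labels are illustrative, and the surface, freshness, and budget questions still need a human answer.

```python
def pick_method(
    records_per_month: int,
    has_engineering: bool,
    can_burn_account: bool = False,
    one_off_build: bool = False,
) -> str:
    """Map three of the six questions onto a method label (simplified)."""
    # Question 1: 100,000+ records per month is data-broker territory.
    if records_per_month >= 100_000:
        return "Data broker tier"
    # Question 6: no engineering capacity rules out Methods 1 and 2.
    if not has_engineering:
        return "Method 4: native enrichment"
    # Question 2: scrapers stay on the table only with a replaceable account,
    # and only for one-off list builds.
    if one_off_build and can_burn_account:
        return "Method 1: browser scraper"
    # Question 1 again: 10,000+ with engineering points to a third-party API.
    if records_per_month >= 10_000:
        return "Method 2: third-party API"
    return "Method 4: native enrichment"


small_team = pick_method(2_000, has_engineering=False)
revops_team = pick_method(50_000, has_engineering=True)
```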

Key takeaways:

- Volume, account-risk tolerance, and where the team works are the three biggest decision inputs

- Sub-10,000 records per month with no engineering team usually means native enrichment

- 10,000+ records per month with engineering capacity usually means third-party API

- Browser scrapers fit one-off list builds, not daily workflows

Chapter 6: The Derrick approach to LinkedIn data extraction

Derrick is a native enrichment tool. The product philosophy is that LinkedIn data should arrive where you already work, not in a new dashboard you have to learn. In practice, that means four surfaces:

Google Sheets sidebar (the original)

Open any Google Sheet. Open Derrick from the Extensions menu. The sidebar shows the feature catalog as clickable tiles. Pick the column that contains LinkedIn URLs. Click the action. The enriched data writes into the same sheet, in new columns.

This is not a formula library. There is no =DERRICK_ENRICH() to memorize. It is a full sidebar app, and the UX is closer to opening a feature in Notion or Figma than writing a spreadsheet formula.

Workflows that fit:

- A sales team list-building 200-2,000 leads per week

- A recruiter sourcing 50-200 candidate profiles per day

- A growth team scoring 5,000 accounts per quarter against ICP criteria

Free plan includes 100 credits per month (1 credit per lead enrichment, 5 per email found, 150 per phone found). Paid plans start at $9 per month for 4,000 credits.
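Because actions cost different numbers of credits, it helps to work out what a plan buys per action type. The sketch below takes the credit rates and plan prices quoted above at face value; the helper names are hypothetical and are not part of Derrick.

```python
# Credits per action, as quoted above.
CREDITS_PER_ACTION = {"lead": 1, "email": 5, "phone": 150}


def max_actions(credits: int, action: str) -> int:
    """How many actions of one type a credit balance covers."""
    return credits // CREDITS_PER_ACTION[action]


def dollars_per_action(plan_price: float, plan_credits: int, action: str) -> float:
    """Effective dollar cost of one action if every plan credit is used."""
    return plan_price / plan_credits * CREDITS_PER_ACTION[action]


free_leads = max_actions(100, "lead")     # 100
free_emails = max_actions(100, "email")   # 20
free_phones = max_actions(100, "phone")   # 0: one phone lookup costs 150 credits

paid_email_cost = dollars_per_action(9, 4_000, "email")
paid_phone_cost = dollars_per_action(9, 4_000, "phone")
print(f"email: ${paid_email_cost:.4f}, phone: ${paid_phone_cost:.4f}")
```

On these numbers, a found email costs about a cent on the entry paid plan and a found phone about 34 cents, so it is worth budgeting by action type, not by "records".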

Chrome extension (one-click prospecting)

For the workflow where the user is already on LinkedIn, looking at a profile or a search result, the Chrome extension wakes up and pulls the profile or list into the team’s Sheet (or sends it through to an API endpoint, depending on the plan).

Use case: a founder who scrolls LinkedIn for inbound demand, spots a relevant profile, and wants that profile in the CRM-bound enrichment list without ten clicks. One click in Chrome, the profile lands in the right Sheet.

Open API (for builders)

Available from the Standard plan ($20 per month minimum). The API exposes the same enrichment capabilities the sidebar uses, with versioned endpoints, predictable rate limits, and OAuth authentication. Documentation lives at https://app1.derrick-app.com/api/v1/docs/.

Use case: an engineering team piping enrichment into a backend, an n8n or Make automation, or a custom CRM connector.

MCP endpoint (for AI assistants)

The newest surface. An MCP server endpoint that any MCP-compatible AI (Claude Desktop, ChatGPT, others) can call to enrich profiles, find emails, find phones, and search LinkedIn URLs. The AI assistant becomes the team’s enrichment runner. Available from the Standard plan ($20 per month minimum).

Use case: a founder or RevOps person asking their AI assistant “find the head of sales at these 30 companies” and getting LinkedIn URLs and headlines back without leaving the chat.

For the full setup walkthrough, the 3 flavors of LinkedIn scraper MCP (browser-automation, API-based, native enrichment), and the LinkedIn TOS section 8.2 implications, see our deep-dive on the LinkedIn Scraper MCP guide for 2026.

How Derrick fits in the legal picture

Derrick uses third-party data APIs and the user’s own browser session for enrichment. It does not ask the user to paste their LinkedIn cookie into a third-party cloud, which is the most common single risk vector for browser scrapers. The user still needs a working LinkedIn or Sales Navigator account; Derrick is not a workaround for that.

Realistic expectations on data quality, drawn from 31,000+ users in 2026:

- LinkedIn URL match rate from a name + company input: 80-90%

- Email-found rate on B2B lists: 50-70%

- Phone-found rate: 25-40%, with regional variation

Failed lookups do not burn credits where the source supports it.
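Applied to a concrete list, those ranges translate into expected counts. The sketch below assumes a hypothetical 1,000-row list and applies each rate to the whole list, as the rates above are stated.

```python
def expected_range(list_size: int, low_rate: float, high_rate: float) -> tuple[int, int]:
    """Expected low and high hit counts for a list, given a rate range."""
    return round(list_size * low_rate), round(list_size * high_rate)


urls = expected_range(1_000, 0.80, 0.90)     # (800, 900)
emails = expected_range(1_000, 0.50, 0.70)   # (500, 700)
phones = expected_range(1_000, 0.25, 0.40)   # (250, 400)
```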

Key takeaways

- LinkedIn data extraction in 2026 splits into 4 methods, each with a different risk and friction profile

- Browser scrapers are flexible but expose your LinkedIn account; reserve them for one-off list builds

- Third-party APIs are predictable; budget $0.05-$0.50 per record at scale

- The official LinkedIn API rarely covers prospecting use cases

- Native enrichment (Sheets, Chrome, API, MCP) is the lowest-friction path for most B2B teams in 2026

- The right method depends mostly on three of the six questions: volume, account-risk tolerance, and where the team already works

Conclusion: where to start this week

If you are reading this guide to pick a method, the fastest test is the cheapest one: open Google Sheets, install a native enrichment tool, run 50 leads through it, and see whether the workflow feels like work or like one click. If it feels like one click, you have your answer for the next 1,000 leads. If it does not, you know the friction and can shop the other methods accordingly.

The mistake most teams make is picking the method first (often a scraper, because it sounds “powerful”) and then noticing six weeks later that the team only ran it twice because logging into a new dashboard was friction. The method that fits the workflow you already have is the one that gets used.

FAQ

Is it legal to extract data from LinkedIn in 2026?

The legal answer depends on the method and the jurisdiction. The Ninth Circuit (hiQ Labs v. LinkedIn) found that scraping public LinkedIn pages likely does not violate the US Computer Fraud and Abuse Act, but it does violate LinkedIn’s terms of service. In the EU, GDPR adds a layer about lawful basis for processing personal data. Third-party APIs and native enrichment that source data from opt-in databases sit outside the LinkedIn TOS conversation directly.

Can I extract LinkedIn data without a LinkedIn account?

Partly. Third-party data APIs need no LinkedIn account at all. Most other extraction methods, including native enrichment with a browser-based step, still require a working LinkedIn account on the user’s side. The actual differentiator across those tools is whether you have to hand your LinkedIn cookie to a third-party cloud (high risk) or whether the tool runs in your own browser session (low risk).

How accurate is LinkedIn data extraction in 2026?

Profile-level data (name, current company, headline) is usually 90%+ accurate from any serious tool. Email-found rates run 40-70% on B2B lists. Phone-found rates run 15-40%. No tool delivers 100% accuracy; treat any vendor that claims that as a red flag.

How much does it cost to extract LinkedIn data?

Per-record cost runs from $0.02 (heavy-tier API contracts, scraper outputs) to $0.50 (premium APIs with proprietary fields). Native enrichment tools price by credits: $9 per month for ~4,000 credits is a typical entry tier in 2026.

What is the difference between scraping LinkedIn and enriching from LinkedIn?

Scraping means a script or extension reads LinkedIn HTML pages directly. Enriching means a tool takes a LinkedIn URL or name as input and returns structured data sourced from a mix of LinkedIn-adjacent public sources, opt-in databases, and verification providers. Enrichment is the safer, more sustainable path for ongoing workflows.

Can I get a list of all employees of a LinkedIn company?

Yes, with caveats. Tools that pull employee lists either rely on Sales Navigator search exports (capped at 2,500 results per query) or on third-party databases that have aggregated employee data. Coverage is best for US enterprise companies, patchier for SMB and mid-market in Europe. See our guide on finding a company’s employees on LinkedIn for the practical workflow.

Will my LinkedIn account get banned if I use an extraction tool?

If you use a cloud scraper that holds your session cookie, the risk is real and rises with volume. Accounts running 500+ profile views per day typically see warnings within a week. If you use a native enrichment tool that runs in your own browser at human-like rates, the practical risk is close to manual browsing. Third-party API tools never touch your LinkedIn account at all.